Rethinking Risk Assessment: A Systemic Approach to Risk Measurement

Company management, understood as the balanced governance of the relationship between risk and return, is not a new topic, although it has evolved dramatically over time. Traditionally, this relationship was interpreted mainly in economic and financial terms; with the development of corporate governance models, however, the concept of return has gradually broadened to encompass a company’s ability to generate sustainable value for a wide range of stakeholders. This is the direction taken, for example, by integrated reporting frameworks and by the most recent advances in ESG performance measurement. At the same time, sensitivity to risk has grown enormously in the business world. On the one hand, the economic, social, and competitive environment is becoming ever more dynamic; on the other, new risk factors are emerging (such as cybersecurity threats). These phenomena are directing increasing attention to any scenario that could jeopardize business continuity. Hence the need to introduce systems, processes, tools, and mechanisms that can help companies anticipate, monitor, and manage the elements that may generate uncertainty in business operations. In this context, it is essential to conceive of risk as a broad, cross-cutting dimension.

A systemic interpretation of business risk

In 2012, Kaplan and Mikes proposed a classification of business risks consistent with a systemic interpretation. In this framework, the authors distinguish between preventable risks, strategic risks, and external risks. Preventable risks refer to internal episodes, often linked to people behaving in ways that may (consciously or unconsciously) disrupt the smooth functioning of the company; since these risks are associated with internal processes, they are generally considered controllable. Strategic risks, by contrast, arise from the choices that underpin the company’s strategy. Although they originate internally and can therefore be assessed ex ante, they are not necessarily controllable once the choice is made: the degree of controllability depends on how reversible the decision is. Finally, external risks stem from uncontrollable exogenous factors; the probability that they will occur varies, but if they do occur, they can seriously threaten the company’s prospects for survival. In recent years, marked by exceptional events such as the pandemic, the energy crisis, and heightened geopolitical conflict, companies have become more acutely aware of this category of risk than ever before and are striving to address it.

This classification of risks, despite its limitations, highlights the need for differentiated intervention strategies. For the first type, we can envisage a coordinated and coherent system of interventions, such as building a solid corporate governance structure, designing an internal regulatory system, establishing a set of rules and procedures, and formalizing audit processes and control systems. For the second and third types, by contrast, potential initiatives might include introducing simulation and forecasting systems, developing diagnostic tools capable of singling out potential emerging risks on the basis of weak signals, devising risk mitigation policies (ranging from insurance instruments to alternative courses of action for particularly risky options), and formulating emergency risk management plans in case the need arises.

While linking each specific risk to a specific intervention may be useful from a theoretical standpoint, doing so in practice is not always straightforward. Experience suggests that only a combination of organizational, operational, and informational initiatives can adequately cover business risks, regardless of their specific nature. From this perspective, balanced risk management is primarily a cultural issue. Indeed, by disseminating a corporate culture that is sensitive to risk management, a company can overcome certain recurring pitfalls. According to the authors cited above, these include individual cognitive biases (which may lead, for example, to behavior driven by overconfidence), excessive functional focus (which fragments risks and causes managers to lose sight of the big picture), and resistance to change when improved risk management calls for modifying established habits and practices.

Since a company’s overall risk–return profile is nothing more than the aggregate outcome of choices made across different decision-making areas, performance measurement systems play a crucial role in aligning managerial processes and decision-making with the risk–return objectives promised to stakeholders (Damodaran, 2007). However, despite the long-standing tradition of using performance metrics in corporate reporting, the same cannot be said for risk metrics. Reporting packages, in fact, rarely include measures explicitly derived from a systematic and continuous assessment of corporate risks.

This asymmetry is particularly striking in comparison to purely financial decision-making. When building securities portfolios, for example, decisions paradoxically begin with risk profiling and subsequently identify optimal investments from a return perspective. In stark contrast, in business decisions the risk dimension is often absent (apart from certain investment choices). While this shortcoming may not be particularly problematic in periods of high stability and strategic or organizational continuity, it is critical under highly discontinuous or difficult-to-predict conditions. In these circumstances, focusing exclusively on returns may lead to suboptimal—if not dysfunctional—decisions. In light of all this, in the current business environment, with its dramatic discontinuity, companies must tackle the urgent task of devising risk monitoring systems. But before adopting this new perspective on risk measurement, they have two choices to make:

- Delineating the scope of application of risk measurement, acknowledging that the costs of a measurement system are justifiable only in areas of activity where risks are material for the company.

- Deciding which risks to monitor and control; risk is a broad, blurry concept, so the factors that determine and influence it must be meticulously identified.

Measuring risks: The scope of application

Business risks arise from choices made when organizing and managing operational processes. In this sense, the object of risk measurement can only be the process itself. This approach is supported by a substantial body of literature on compliance decisions (Benedek and Bognár, 2024; Mustapha et al., 2020) and on auditing frameworks, which consistently recognize processes as the primary units of analysis and control. It is therefore no coincidence that the principles, rules, and procedures developed to mitigate business risk refer to operational processes and to the behavioral norms that should govern them. Ultimately, such risks stem either from the behaviors embedded in business processes or from events that may compromise the proper functioning of those processes.

The risks arising from reckless or dysfunctional behavior can be contained by promoting and enforcing compliance with rules and best practices, an approach that underpins the principles and tools of internal auditing. However, with regard to risks associated with occurrences that could undermine business continuity, these are not necessarily mitigated by behavioral rules alone. Instead, such contingencies call for measurement systems designed to detect potential warning signs, preferably at an early stage. This enables the company to devise appropriate mitigation policies and actions when feasible, or at least to recognize the combined risk-return implications that a given business event may entail. This risk measurement approach is also fully consistent with another major challenge for performance measurement systems, namely monitoring ESG initiatives. The double materiality approach, in fact, focuses on identifying and quantifying external incidents related to material issues that may affect business performance in the short, medium, and long term (Nielsen, 2023).

At the same time, constructing a comprehensive metric system covering all corporate processes would be extremely costly and potentially ineffective, especially when the objective is to foster a risk-aware culture, which by nature must follow a gradual implementation path. This consideration highlights the need to pinpoint the most critical processes where monitoring efforts should be concentrated. To do so, it may be useful to draw on the extensive literature and the various methodologies for classifying business processes (Dossi, 2001).

In relation to this, two meaningful criteria are economic relevance and strategic significance. Economic relevance refers to the volume and value of the resources absorbed by the process in question. From a cost perspective, it is clearly essential to prioritize processes that absorb the most resources, whether directly (production factors used) or indirectly (production factors coordinated or managed). The first dimension of risk therefore concerns the resources utilized by the company; relevant issues may be unavailability, inadequacy, or excessive cost. This type of risk is particularly salient in areas of activity that necessitate the use of multiple production factors, which often have different technical characteristics and suppliers, and are utilized in substantial quantities. By contrast, processes that involve a limited number of factors in small quantities are, with rare exceptions, easier to manage from a risk containment perspective.

Strategic significance, in turn, relates to the impact that each process has on the defensibility of the company’s business model; more specifically, we are referring to the robustness of the company’s revenue model. From this standpoint as well, monitoring efforts should focus on processes that have a direct or indirect bearing on the firm’s ability to generate and sustain value over time. Therefore, a second dimension of risk concerns the capacity to ensure the continuity of the business model while preserving its distinctiveness in the short and medium-to-long term. Since these areas of activity, if exposed to adverse external events, could threaten the company’s sustainability, they must occupy a central position within the measurement system. Conversely, support processes that do not exert a direct or indirect influence on the firm’s competitive positioning in its target markets, however economically relevant they may be, are less material from a strategic perspective, so they can be addressed as a secondary priority.

In summary, an effective risk measurement system should initially focus on processes with high economic relevance and strategic significance. Inadequate intervention in these areas may simultaneously affect both the cost structure and the revenue-generating capacity of the company or its individual business units. Concentrating measurement efforts on these processes also helps to ensure that managerial attention is appropriately directed, thereby reducing the “noise” effect (Kahneman et al., 2021) that often characterizes diagnostic systems, particularly when new measurement methods are introduced.
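The prioritization rule above (start where economic relevance and strategic significance are both high) can be sketched as a simple quadrant filter. This is an illustrative sketch only: the article does not prescribe numeric scales, so the 0 to 1 scores, the 0.5 cut-offs, and the process names below are hypothetical assumptions.

```python
from dataclasses import dataclass


@dataclass
class Process:
    name: str
    economic_relevance: float      # hypothetical 0-1 score: resources absorbed, directly or indirectly
    strategic_significance: float  # hypothetical 0-1 score: bearing on the revenue model


def priority_processes(processes, relevance_cut=0.5, significance_cut=0.5):
    """Keep only processes in the high-relevance / high-significance quadrant.

    Processes are ranked by the weaker of their two scores, since both
    dimensions must be high for a process to be a first-wave candidate.
    """
    selected = [
        p for p in processes
        if p.economic_relevance >= relevance_cut
        and p.strategic_significance >= significance_cut
    ]
    return sorted(
        selected,
        key=lambda p: min(p.economic_relevance, p.strategic_significance),
        reverse=True,
    )
```

Ranking by the minimum of the two scores, rather than their sum, is one possible design choice: it keeps a process that is economically large but strategically marginal (a support process, in the article's terms) from outranking one that is strong on both dimensions.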

Measuring risks: Choosing the types of risk

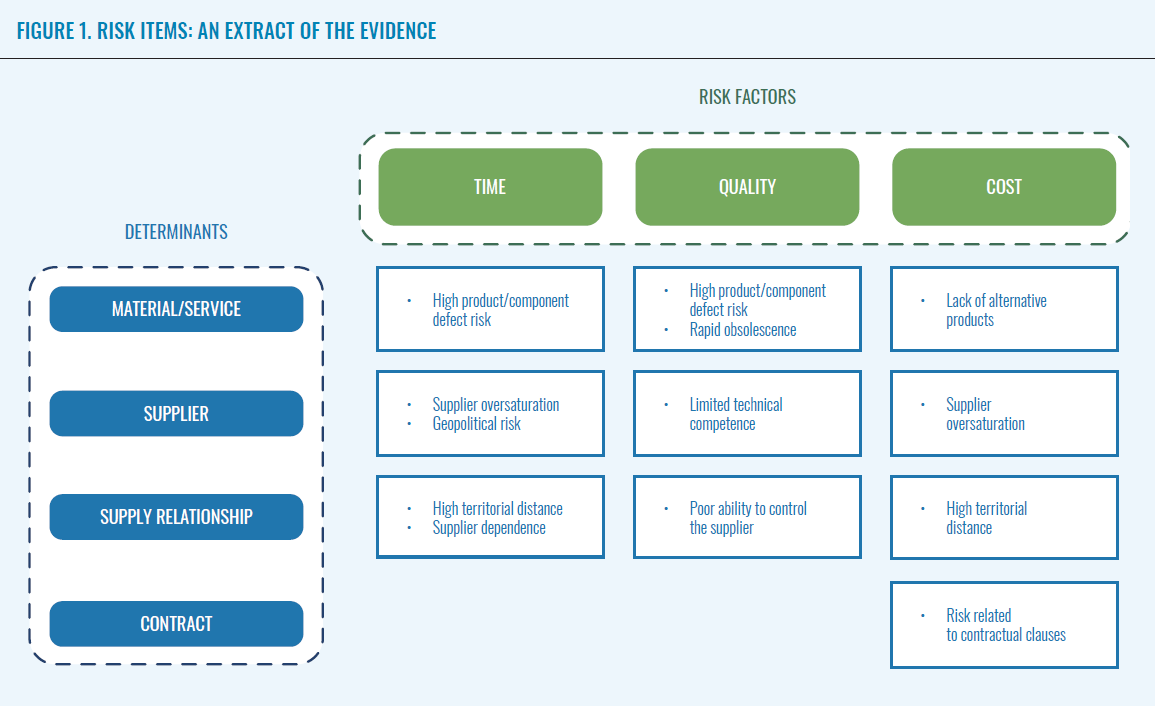

After defining the scope of application, the next mandatory step in building a business risk measurement model is to identify two fundamental elements: risk factors and risk determinants.

Risk factors are the dimensions that qualify the risk of a business process. The term “risk” is inherently generic: it expresses a measurement perspective but does not specify how the dangers that threaten business activities might manifest. These manifestations must be clearly discerned to determine which metrics to apply to monitor each risk. To this end, it may be useful to refer to what the literature describes as process performance. In other words, risk can be understood as the set of events that may potentially compromise performance. From this standpoint, traditional process performance indicators distinguish between cost, time, and quality performance. A process can be considered adequate when it ensures conditions of efficiency (cost), timeliness (time), and effectiveness (quality) that are consistent with corporate strategies (Dossi, 2001), or at least with the company’s minimum operating constraints. Process risk is therefore the possibility that the company may incur unplanned or inadequately assessed additional costs, experience delays resulting from missed deadlines (with consequent disruptions in more complex execution processes), and/or fail to meet the quality standards pledged by the company or expected by internal and external customers, leading to potential malfunctions or inefficiencies.
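To make the three risk factors concrete, the sketch below expresses a single completed purchase order as deviations along cost, time, and quality, the dimensions in which process risk manifests. It is a hypothetical illustration, not the article's method: the field names and the 2% tolerated defect rate are assumptions, and a real system would look at such deviations ex ante (as risk) rather than only after the fact.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class OrderOutcome:
    planned_cost: float
    actual_cost: float
    due_date: date
    delivery_date: date
    units_received: int
    units_defective: int


def risk_factor_deviations(o: OrderOutcome, max_defect_rate: float = 0.02) -> dict:
    """Express one order along the three risk factors (cost, time, quality).

    Every value is 0 when performance met plan and positive when it did not.
    max_defect_rate is a hypothetical tolerated quality standard.
    """
    cost_overrun = (o.actual_cost - o.planned_cost) / o.planned_cost
    delay_days = max((o.delivery_date - o.due_date).days, 0)
    defect_rate = o.units_defective / o.units_received if o.units_received else 0.0
    return {
        "cost": max(cost_overrun, 0.0),                     # share of unplanned extra cost
        "time": delay_days,                                  # days past the contractual deadline
        "quality": max(defect_rate - max_defect_rate, 0.0),  # defect rate above the standard
    }
```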

Risk determinants, by contrast, are the qualifying elements of the process which, if not properly monitored, may affect cost, time, and quality performance. More specifically, we are referring to structural and operational characteristics that affect the smooth functioning of business processes. If these elements are not properly anticipated and managed, they can generate the negative impacts on process performance outlined above.

The intersection between risk factors and risk determinants provides a useful reference framework for identifying specific events that generate process risk and, consequently, business risk. An event is defined here as a characteristic or occurrence that may potentially affect the company’s operations. In particular, this refers to circumstances that lie outside the company’s direct control, or over which it has only limited influence, and which therefore require the adoption of appropriate mitigation tools. This category includes, for example, certain structural characteristics of the production factors employed in business activities, volatility related to their procurement or use, as well as specific conditions of the reference market which can be highly unpredictable. These are precisely the types of situations that a risk measurement system must be able to detect, and identifying and recognizing these events represents a crucial step in building one. Without a clear focus on these elements, the system would be in danger of becoming chaotic, inconsistent, and incoherent.

This step, however, is only preliminary. In fact, it serves as the basis for a more structured system design process, which can be articulated into four distinct phases:

- Identifying reliable metrics for each type of event/characteristic: This represents the key decision in building the measurement system, as it involves selecting quantitative parameters that objectively capture the dimensions that may exacerbate the risk profile associated with a specific episode. In this phase, feasibility and data reliability issues often arise. Given the relative novelty of risk-oriented measurement systems, consistent and structured information is not always available in real-world scenarios. In some cases, proxy measures may be adopted; in others, quantitative measures can be replaced by qualitative judgments, but this has clear limitations in terms of subjectivity and could hinder the development of a robust measurement system.

- Setting a target for each metric that corresponds to an acceptable level of risk: This choice is also particularly critical. Thanks to the widespread use of performance measures, it is relatively straightforward to find benchmarks for performance-related dimensions, supported by established processes such as planning, budgeting, and forecasting. By contrast, similar familiarity is often lacking when it comes to appropriate risk profiles (as discussed earlier in this article with reference to the risk–return balance). Historical data collection may provide a useful starting point, but meaningful progress occurs only when risk becomes a central perspective in all targeting and forecasting processes.

- Translating the distance from the target into a risk metric: From an implementation standpoint, this is basically a technical step. Given an ideal risk profile associated with each metric, the level of risk linked to a specific event or characteristic is determined by the distance between the observed value and the target value.

- Synthesizing specific risks into aggregated measures that can be used to monitor complex phenomena: To avoid an excessive proliferation of indicators, individual parameters should be aggregated into higher-level risk measures. By compiling key synthetic risk indicators for each risk determinant (conceptually analogous to the synthetic indicators used in economic and financial performance measurement), we can enhance the diagnostic capacity of the system, ensuring appropriate managerial orientation and focus. These indicators can be obtained through aggregation, simple averages, or weighted averages of the elements that collectively define the risk in question.
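The last two phases (distance from target, then aggregation into a synthetic indicator) can be sketched as follows. This is a minimal sketch under stated assumptions: the article only says that risk is the distance between observed and target values, so the linear mapping, the "worst" value at which risk saturates at 100, and the example metrics and weights are all hypothetical.

```python
def risk_from_distance(observed, target, worst, higher_is_riskier=True):
    """Map the gap between an observed value and its target onto a 0-100 risk score.

    At or better than target -> 0; at or beyond the hypothetical 'worst'
    value -> 100; linear in between.
    """
    if higher_is_riskier:
        gap, span = observed - target, worst - target
    else:
        gap, span = target - observed, target - worst
    if span <= 0:
        raise ValueError("worst must lie on the risky side of target")
    return 100.0 * min(max(gap / span, 0.0), 1.0)


def synthetic_indicator(risks, weights=None):
    """Weighted average of item-level risk scores; a simple average if no weights."""
    if weights is None:
        weights = {k: 1.0 for k in risks}
    total = sum(weights[k] for k in risks)
    return sum(risks[k] * weights[k] for k in risks) / total


# Hypothetical example: a lead time of 45 days against a 30-day target
# (60 days = maximum risk), and a supplier rating of 70 against a target of 80
# (40 = maximum risk; here lower values are riskier).
scores = {
    "lead_time": risk_from_distance(45, target=30, worst=60),                         # 50.0
    "rating": risk_from_distance(70, target=80, worst=40, higher_is_riskier=False),  # 25.0
}
print(synthetic_indicator(scores))                                     # 37.5
print(synthetic_indicator(scores, {"lead_time": 2.0, "rating": 1.0}))  # about 41.67
```

Clamping the score to 0 to 100 keeps values that are better than target from offsetting other risks in the aggregate; whether that is desirable is a design choice the article leaves open.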

Each of the steps outlined above, which entail specific implementation challenges, may not be immediately intuitive. So, a real-life example may be useful to clarify how this procedure would be implemented in a company. The ELT case represents a concrete application of the risk measurement system, illustrating both how to define the scope of application of the model and how to apply it in practice, from establishing the measurement framework to building key synthetic risk indicators. In addition, the case serves to highlight the difficulties that come up during implementation and the most common mistakes to avoid, offering practical guidance for the effective adoption of the model.[1]

Designing a process risk measurement system: the ELT case

Founded in Rome in 1951, today the ELT Group is one of the leading international players in the electronic defense and security sector. Building on experience gained from participating in major European defense programs – including Eurofighter Typhoon, FREMM, and NH90 – the Group now operates in more than thirty countries worldwide, supplying over 3,000 systems. ELT is also a founding member of the GCAP Electronic Evolution consortium in the sixth-generation Global Combat Air Program (GCAP).

ELT maintains a presence in eleven countries across four continents with a network of sales offices (in Egypt, India, Pakistan, Qatar, Germany, and Belgium), operational branches (in the United Arab Emirates, Singapore, and the Kingdom of Saudi Arabia), and foreign subsidiaries located in the Kingdom of Saudi Arabia, Germany, and the United States. Thanks to innovative management of the electromagnetic spectrum, enabled by integrated proprietary technologies, the ELT Group has evolved into an international, multi-domain organization, extending its activities beyond traditional electronic defense to include cyber, space, and biodefense.

Subsidiaries of the Group are CY4GATE, specializing in cybersecurity and intelligence, and E4LIFE, focused on biodefense, as well as ELT GmbH, a German company dedicated to testing and validation activities, ELTHUB, a hub specializing in space and electro-optics, and Solynx, a US-based procurement company. The breadth and complexity of the Group’s competencies reflect a diversification strategy aimed at expanding its presence well beyond the boundaries of traditional defense, in line with its mission to protect people, data, and assets.

In recent years, the growing demand for technological solutions capable of supporting a multi-domain approach, together with the proliferation of hybrid conflicts, has positioned the company as a key player in the development of sovereign technologies that can tackle emerging challenges. At the same time, the multiplication of growth opportunities has prompted the Group to adopt increasingly sophisticated risk management practices in order to respond effectively to intensified technological competition as well as supply chain vulnerabilities, while ensuring long-term robustness and resilience.

Choosing the process

When selecting the process to prioritize in devising a risk monitoring system, it is essential to understand the company’s organizational model. This model is structured around programs, which are understood as operational units consisting of contractual commitments to customers that are fulfilled with the delivery of the product or solution. Each program may include one or more products, depending on the specifications stipulated in the contract. Within this framework, program execution relies on a set of core processes and support processes. Among these, ELT’s engineering-related processes – particularly those associated with the design phase – play a central role, supported by managerial and operational functions such as Supply Chain and Customer Support.

In line with the criteria of economic relevance and strategic significance, and in consideration of the company’s business model, the risk measurement initiative deployed by ELT centered on the supply chain process. This process matters not only because of the economic relevance of the resources it employs directly but, more importantly, because of the resources it manages indirectly. The supply chain process governs the acquisition and coordination of the company’s core assets and services, thereby directly affecting program execution, competitiveness, and the fulfillment of contractual commitments, including delivery terms and deadlines. From the combined perspectives of economic relevance and strategic significance, therefore, the supply chain emerges as one of the most critical processes, influencing both the cost structure (consider, for example, the penalties associated with contractual delays in a sector as sensitive as national defense) and the overall defensibility of the business model.

It is worth noting that the analysis was not limited to the supply chain in a narrow sense, understood solely as a set of purchasing activities. Rather, the process was examined from a broader perspective, spanning the contracting phase through to post-delivery activities. This wider scope was necessary to reveal the dynamics that most frequently trigger serious risks, and to highlight the interdependencies among organizational areas that, while not directly involved in procurement, exert a substantial influence on its outcomes.

Constructing the process risk measurement system

The goal of the project was to develop a risk measurement system for ELT Group’s supply chain. The work was organized into a sequence of distinct phases, each progressively refining the model. In particular, the process alternated between the main phases outlined in the introductory section of this article and the moments of reflection that managers needed as they applied the proposed framework to their business practices. This iterative approach allowed for continuous adjustments as the model was translated into operational terms. The phases of the project can be summarized as follows:

- Delineating the scope of the system

- Defining risk determinants and risk factors

- Detecting risk items

- Operationalizing the framework (from identifying metrics to rationalizing algorithms)

- Testing and validating the model

The scope of application

The first step, delineating the scope of application of the system, is an essential preliminary phase intended to guide the subsequent stages of the project in a direction that would maximize the effectiveness of the tool for the supply chain function. The scope was defined by considering three distinct levels of analysis: the activities involved, the time horizon for risk detection, and the attributes of the procured goods and services to include in the system.

With regard to the procurement activities to be covered, the choice lay between a restricted approach (limited exclusively to procurement activity and all upstream phases) and an extended approach, which would also include post-procurement phases that may represent notable sources of risk. Consider, for example, the risks that can emerge after delivery, such as customer warranty obligations; these should ideally be identified as early as the procurement stage. ELT decided to adopt an extended scope, encompassing risks that emerge during negotiations as well as after sales are finalized. Management was fully aware that this approach would require greater effort in terms of system population and maintenance, but by the same token it would capture the widest possible range of risks and cover the scope of the system as comprehensively as possible.

Similarly, attention was devoted to finding the most appropriate risk assessment touchpoints and determining the stages of the program lifecycle where risk detection should focus. These efforts led the working group to favor a continuous monitoring approach, aimed at systematically spotting risk elements throughout the entire program development process. In this way, the function could maintain a constantly updated view of the risks under analysis, even beyond the formal conclusion of the program lifecycle, with an eye to identifying and tracking all risk factors up to the final stages of post-delivery support.

Finally, the last consideration regarding the scope of application concerned the attributes of the inputs to include in the system. In particular, the working group weighed the benefits of excluding certain categories of goods (such as indirect or intra-group purchases) to make the analysis more efficient and to focus attention on areas of greatest relevance for the supply chain function. After assessing the historical value of inputs and their intrinsic characteristics, the decision was made to omit some purchased production factors that had low risk profiles or were more complex to monitor. This move helped avoid excessive informational overload and concentrate measurement efforts on spheres of genuine materiality for the risk measurement system.
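The input-scoping decision can be pictured as a filter applied before any risk is measured. The sketch below is hypothetical: the field names, the excluded categories (the article says the working group only weighed excluding indirect and intra-group purchases), and the optional value threshold are assumptions; the exclusion of inputs with low risk profiles or that are too complex to monitor follows the text.

```python
from dataclasses import dataclass


@dataclass
class Purchase:
    item: str
    category: str          # hypothetical labels, e.g. "direct", "indirect", "intra-group"
    annual_value: float    # historical purchase value
    low_risk: bool         # flagged by a prior assessment as having a low risk profile
    hard_to_monitor: bool  # flagged as too complex to monitor for now

# Hypothetical configuration: categories left out of the measurement system.
EXCLUDED_CATEGORIES = {"indirect", "intra-group"}


def in_scope(purchases, min_value=0.0):
    """Return only the purchased inputs that the risk system should track."""
    return [
        p for p in purchases
        if p.category not in EXCLUDED_CATEGORIES
        and not p.low_risk
        and not p.hard_to_monitor
        and p.annual_value >= min_value
    ]
```

Keeping the exclusion rules as explicit configuration makes it easy to widen the scope later, consistent with the gradual implementation path the article recommends.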

Determinants and risk factors

Once the scope of the analysis is established, the next step is to identify risk determinants and risk factors. As discussed in the introduction of this article, risk determinants refer to the structural and operational elements of processes which, if not adequately monitored, may give rise to serious issues, whereas risk factors correspond to the dimensions in which such issues manifest. This phase is particularly critical, as the choices made here shape the overall architecture of the risk measurement system.

As for risk factors, after some discussion the ELT working group decided to classify them into three main categories, which are also clearly observable in the specific context of the procurement process:

- Time, referring to delays and procurement lead times.

- Quality, relating to the performance levels of the procured goods or services.

- Cost, associated with cost increases resulting from process inefficiencies or disruptions.

With respect to risk determinants, the analysis identified the following key elements:

- The supplier, as the counterparty who ensures that goods and services are available. The characteristics and operating conditions of this company directly affect the stability and resilience of the entire supply chain.

- The supply relationship, i.e., the degree of dependence, contractual flexibility, and capacity for collaboration. Fragile or unbalanced relationships exacerbate process vulnerability.

- The material or service supplied, whose attributes (such as criticality, complexity, and substitutability) influence the firm’s ability to ensure operational continuity in case of disruptions.

- The contract, a source of constraints and commitments that directly shape procurement decisions.
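The three risk factors and four risk determinants define a grid whose cells are where risk items are placed. The sketch below builds that grid as a dictionary keyed by (determinant, factor). The two risk item names come from Table 1, but which factor each one is assigned to is a hypothetical assumption made only for illustration.

```python
from itertools import product

FACTORS = ("time", "quality", "cost")
DETERMINANTS = ("supplier", "supply relationship", "material/service", "contract")


def empty_risk_map():
    """One empty cell per (determinant, factor) pair; cells will hold risk items."""
    return {cell: [] for cell in product(DETERMINANTS, FACTORS)}


risk_map = empty_risk_map()
# Hypothetical placements: the factor assigned to each item is an assumption.
risk_map[("supplier", "time")].append("Geopolitical risk")
risk_map[("material/service", "quality")].append("Risk of incomplete technical specifications")

# Cells that are still empty show where the mapping may be incomplete.
empty_cells = [cell for cell, items in risk_map.items() if not items]
```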

Identifying risk items

At the intersection of risk determinants and risk factors, risk items emerge; these are understood as specific events that generate process risk and, consequently, business risk. Each risk item corresponds to a concrete manifestation of criticality arising from a given determinant and associated with an impact on one of the three dimensions listed above. Identifying these risk items is a fundamental step that allows the company to translate the variety of risk situations it is exposed to in its day-to-day operations into concrete terms. This makes risk measurable, monitorable, and manageable.

To prevent the mapping activity from generating an excessive or inconsistent set of risk items, the ELT team had to introduce a series of logical and operational constraints, conceived as explicit selection criteria. From a logical standpoint, the first constraint was causality, that is, the need to focus on the determinants of systemic situations rather than on incidents caused by isolated or random human errors. This was complemented by the criterion of governability, which meant directing attention only toward episodes that were predictable, mitigable, and manageable at the organizational level, regardless of whether the supply chain function could intervene directly. Finally, the third logical constraint was objectivity, intended to prioritize occurrences that allow for clear triangulation of risk factors, determinants, and detection objects.

From an operational perspective, the work was guided by two practical criteria. The first was timeliness. Specifically, the indicators associated with the events in question had to be available when it was still possible to anticipate the risk, before it could materialize. The second was data quality, to ensure that the system relied on accurate and consistent information, thereby minimizing the risk of informing strategic decisions with distorted or incomplete evidence.
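The five selection criteria (three logical, two operational) act as a gate that every candidate risk item must pass. A minimal sketch, assuming each criterion is judged as a simple yes/no by the working group; the boolean fields and the example candidates are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class CandidateItem:
    name: str
    systemic: bool       # causality: systemic situation, not an isolated human error
    governable: bool     # predictable, mitigable, and manageable at organizational level
    objective: bool      # clear link between factor, determinant, and detection object
    timely: bool         # indicator available before the risk materializes
    reliable_data: bool  # accurate and consistent information source


def passes_constraints(c: CandidateItem) -> bool:
    logical = c.systemic and c.governable and c.objective
    operational = c.timely and c.reliable_data
    return logical and operational


def select_items(candidates):
    """Keep only the candidate risk items that satisfy every constraint."""
    return [c.name for c in candidates if passes_constraints(c)]
```

In practice the judgments may be graded rather than binary; the all-or-nothing rule here simply mirrors the article's use of the criteria as explicit filters against proliferation.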

Taken together, these constraints played a crucial role in keeping the system streamlined, coherent, and purpose-driven. In addition, they helped prevent the model from generating an excessive proliferation of risks and metrics, which would be difficult to interpret and operationalize. Figure 1 provides illustrative examples of selected risk items.[2]

Operationalizing the framework

Once the set of risk items is defined, the most intensive and critical phase of the entire process begins: translating the framework into operational terms. At this point, the team specifies in detail the metrics and calculation algorithms that will be used to assess the risk associated with each individual item, together with the aggregation approach used to estimate the overall systemic risk of the procurement process.

To carry out risk assessment at the individual item level, the first step is to ascertain the most appropriate metric to capture the magnitude of each risk, as well as the information sources that are needed to apply the selected calculation algorithms. This represents a pivotal moment, as it constitutes a concrete test of the theoretical feasibility of the dataset initially envisaged, ensuring that the final tool is both efficient and effective. Risk metrics may draw on a variety of information sources, which broadly speaking are either internal, such as data already available in corporate information systems on supplier characteristics or on the procured goods and services, or external, including ratings provided by specialized organizations assessing territorial, geopolitical, or regulatory risks. (Table 1 reports examples of the risk measures adopted by ELT.[3])

The primary objective of this phase is to evaluate each risk element while maintaining a strong focus on measurement reliability. From this perspective, it is preferable to begin with a limited set of metrics that are robust and truly representative of the underlying risk, rather than a broader but internally inconsistent group of indicators. This approach makes it possible to establish a solid, credible foundation for risk estimation, both at the level of individual risk items and at the aggregate, systemic level.

Table 1. Risk metrics: An extract of the evidence

| No. | Risk item | Determinant | Internal/external source | Risk metric |
|---|---|---|---|---|
| 1 | Hydrogeological/climatic risk | Supplier | External | Climate Risk Index |
| 2 | Economic and financial risk | Supplier | External | CBI risk rating |
| 3 | Geopolitical risk | Supplier | External | GP score |
| 4 | Technical competence risk | External | Internal | Supplier competence risk index |
| 5 | Sociopolitical risk | Supplier | External | Political risk index |
| 6 | Risk of supplier oversaturation | Supplier (relationship) | Internal and external | Supplier saturation index |
| 7 | Risk of territorial distance | Supplier (relationship) | Internal | Territorial distance index |
| 8 | Risk of incomplete technical specifications | Material/Service | Internal | Technical specifications index |
| 9 | Risk of obsolescence of material/service | Material/Service | Internal | Obsolescence index |
| 10 | Risk of revocation of import/export authorizations | Contract | Internal | Index of revocation of authorizations |

Once the final value of each measure has been calculated, the results must be standardized so that they can be interpreted from a systemic perspective. The measures may be based on different calculation algorithms and rely on heterogeneous measurement scales. Some algorithms, for example, extract specific data from reports or databases and return values expressed as percentages or as numerical scores on discrete scales (e.g., from zero to three). Others, by contrast, produce results in the form of qualitative judgments on ordered levels (such as low–medium–high) or as dichotomous variables (yes/no). It is therefore essential to translate these different scales into a single representation: a degree of risk expressed on a percentage scale from zero to one hundred, with a consistent orientation. In other words, it must be clearly established whether higher values correspond to higher or to lower levels of risk.
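The standardization step can be illustrated with a minimal Python sketch. It assumes that every measure is rescaled linearly onto 0-100 and oriented so that higher values always mean higher risk. The function names, the evenly spaced mapping of low/medium/high, and the convention that a "yes" signals the presence of a risk are all illustrative assumptions. They are not the rules ELT actually applied.

```python
# Minimal sketch: map heterogeneous risk measures onto a common 0-100 scale
# where higher values always mean higher risk. The evenly spaced ordinal
# levels and the "yes means risk" default are assumptions, not ELT's rules.

ORDINAL_LEVELS = {"low": 0.0, "medium": 50.0, "high": 100.0}  # hypothetical spacing


def from_percentage(value: float, higher_is_riskier: bool = True) -> float:
    """Clamp a percentage to 0-100 and flip it if lower values mean more risk."""
    score = min(max(value, 0.0), 100.0)
    return score if higher_is_riskier else 100.0 - score


def from_discrete(value: int, max_score: int = 3, higher_is_riskier: bool = True) -> float:
    """Rescale a discrete score on 0..max_score linearly to 0-100."""
    if not 0 <= value <= max_score:
        raise ValueError(f"score {value} outside 0..{max_score}")
    score = 100.0 * value / max_score
    return score if higher_is_riskier else 100.0 - score


def from_ordinal(label: str) -> float:
    """Map an ordered qualitative judgment (low/medium/high) to 0-100."""
    return ORDINAL_LEVELS[label.strip().lower()]


def from_binary(flag: bool, yes_means_risk: bool = True) -> float:
    """Map a yes/no variable to the two ends of the scale."""
    return 100.0 if flag == yes_means_risk else 0.0


if __name__ == "__main__":
    print(from_percentage(35.0))          # 35.0
    print(round(from_discrete(2), 1))     # 66.7
    print(from_ordinal("Medium"))         # 50.0
    print(from_binary(True))              # 100.0
```

The orientation flags carry the "consistent orientation" requirement: a measure on which a high value means low risk is flipped at conversion time. Every later step can then treat 100 as the riskiest value.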

Once the representation of the different risk elements is standardized, the next step is to define the logic for aggregating the measures associated with the items under analysis. This is essential to obtain a concrete view of aggregate risk along the selected dimensions. In the project under consideration, these dimensions are the supplier and the material/service. Aggregation may be performed through mathematical functions such as simple averages or, preferably, weighted averages. Weighted averages are particularly appropriate because they assign greater relevance to the risk elements that have a stronger impact on the overall risk profile of the object in question.
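A weighted average over standardized scores takes only a few lines of Python. The sketch below is minimal: the item names and weights are hypothetical, since the actual weights are not disclosed. It also assumes one design choice that the article does not state. When an item has no score for a given object, its weight is dropped and the remaining weights are renormalized, so the result stays on the 0-100 scale.

```python
# Minimal sketch: aggregate standardized (0-100) risk scores of one control
# object (e.g. a supplier) with a weighted average. Item names and weights
# are hypothetical; the article does not disclose ELT's actual weights.
from collections.abc import Mapping


def weighted_risk(scores: Mapping[str, float], weights: Mapping[str, float]) -> float:
    """Weighted average over the items that actually have a score.

    Weights of missing items are ignored and the remaining weights are
    renormalized, so the result stays on the 0-100 scale.
    """
    used = {item: weights[item] for item in scores}  # KeyError if an item has no weight
    total_weight = sum(used.values())
    if total_weight <= 0:
        raise ValueError("weights of the scored items must sum to a positive value")
    return sum(scores[item] * w for item, w in used.items()) / total_weight


if __name__ == "__main__":
    supplier_weights = {"economic_financial": 0.5, "geopolitical": 0.25, "territorial_distance": 0.25}
    supplier_scores = {"economic_financial": 60.0, "geopolitical": 20.0, "territorial_distance": 40.0}
    print(weighted_risk(supplier_scores, supplier_weights))  # 45.0
    # A simple (unweighted) average of the same scores would give 40.0,
    # understating the more heavily weighted economic and financial risk.
```

The same function can be applied separately to each dimension, once with supplier-related items and once with material/service-related items, to obtain one aggregate score per control object.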

Finally, once the analytical risk has been calculated, the synthesis approach must be established: the method for deriving a systemic risk indicator that accurately reflects the function's level of exposure. The preceding steps measure risk analytically at the level of individual control objects; this final phase aims to construct an overall, system-level rating. Two main activities are required to achieve this objective. The first is weighting the risk items, that is, assigning differentiated weights based on their relative importance. This follows a logic analogous to that applied when aggregating the risk elements of individual control objects. The second is setting risk thresholds (cut-offs) and alert mechanisms. Thresholds distinguish between acceptable and critical levels of risk. Alerts, which apply to a limited subset of risks, are triggered when clearly defined conditions are met. They direct managers' attention to situations that may initially appear to entail low risk but in fact conceal elements that are potentially perilous for the system.

The outcome of this process is a systemic risk index: a synthetic and easily interpretable metric that not only provides a snapshot of the current status of the function but also serves as a valuable tool to support strategic decision-making and governance processes.
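The synthesis phase described above combines three ingredients: item weights, cut-offs on the resulting index, and item-level alerts. A minimal Python sketch can show how they fit together. All item names, weights, cut-off values, and alert limits are hypothetical placeholders, not ELT's calibration, and the weighted average is only one plausible way to build the index.

```python
# Minimal sketch of the synthesis step: weight item-level scores (0-100) into
# a systemic risk index, classify it against cut-offs, and raise alerts for a
# small subset of items. Weights, cut-offs and alert limits are hypothetical.
from dataclasses import dataclass

ITEM_WEIGHTS = {
    "economic_financial": 3.0,
    "geopolitical": 2.0,
    "obsolescence": 1.0,
    "authorization_revocation": 2.0,
}

# Upper bound (exclusive) of each band on the systemic index.
CUTOFFS = [(40.0, "acceptable"), (70.0, "attention"), (float("inf"), "critical")]

# Item-level alert limits: checked regardless of how low the overall index is.
ALERT_LIMITS = {"authorization_revocation": 80.0, "economic_financial": 85.0}


@dataclass
class SystemicAssessment:
    index: float
    level: str
    alerts: list[str]


def assess(item_scores: dict[str, float]) -> SystemicAssessment:
    """Weighted systemic index, its cut-off band, and any triggered alerts."""
    total_weight = sum(ITEM_WEIGHTS[item] for item in item_scores)
    index = sum(score * ITEM_WEIGHTS[item] for item, score in item_scores.items()) / total_weight
    level = next(label for bound, label in CUTOFFS if index < bound)
    alerts = sorted(
        item for item, limit in ALERT_LIMITS.items() if item_scores.get(item, 0.0) >= limit
    )
    return SystemicAssessment(index, level, alerts)


if __name__ == "__main__":
    result = assess({
        "economic_financial": 20.0,
        "geopolitical": 10.0,
        "obsolescence": 40.0,
        "authorization_revocation": 90.0,
    })
    # The index (37.5) falls in the "acceptable" band, yet the revocation
    # risk still triggers an alert: the case the alert mechanism targets.
    print(result)
```

Keeping alerts separate from the index is deliberate. A single severe item can be diluted by weighting, so the alert rule surfaces it even when the overall rating looks reassuring.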

Testing and validating the model

Once the risk measurement model has been constructed, the final essential phase prior to validation is testing. The objective here is to verify the reliability of the proposed framework by comparing the results generated by the model with users' perceptions and their actual operational experience. To this end, in collaboration with the ELT working group, two programs were selected that were particularly sensitive to the risks associated with the control items under analysis. Working on these programs, managers could assess whether the evidence produced by the model was consistent with what they observed in their work, providing concrete feedback on the robustness of the tool.
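The article does not say how the comparison between model output and managers' perceptions was scored. One simple possibility is sketched below in Python, under stated assumptions. Model scores are translated into the same low/medium/high bands that managers use. The share of control objects on which the two agree is then computed, and the disagreements are listed for discussion. The band limits, object identifiers, and sample data are hypothetical.

```python
# Minimal sketch of a consistency check between model output and managers'
# judgments during testing. Band limits and sample data are hypothetical;
# the article does not specify how the comparison was scored.


def band(score: float, low_max: float = 40.0, medium_max: float = 70.0) -> str:
    """Translate a 0-100 model score into the low/medium/high bands managers use."""
    if score < low_max:
        return "low"
    if score < medium_max:
        return "medium"
    return "high"


def compare(model_scores: dict[str, float], manager_view: dict[str, str]) -> tuple[float, list[str]]:
    """Share of objects where model and managers agree, plus the objects to discuss."""
    common = sorted(set(model_scores) & set(manager_view))
    if not common:
        raise ValueError("no control objects assessed by both the model and managers")
    mismatches = [obj for obj in common if band(model_scores[obj]) != manager_view[obj]]
    agreement = 1 - len(mismatches) / len(common)
    return agreement, mismatches


if __name__ == "__main__":
    model = {"SUP-A": 25.0, "SUP-B": 55.0, "MAT-7": 82.0, "MAT-9": 35.0}
    managers = {"SUP-A": "low", "SUP-B": "high", "MAT-7": "high", "MAT-9": "low"}
    print(compare(model, managers))  # (0.75, ['SUP-B'])
```

The mismatch list matters more than the agreement rate. Each disagreement is a prompt to check whether the metric, its data source, or the managers' perception needs revisiting.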

Testing the risk items and their associated metrics revealed several critical issues. In some cases, locating the documentation required to calculate specific metrics proved particularly complex and time-consuming. In others, multiple metrics relied on similar data collection methods, and their calculation algorithms failed to produce sufficiently reliable and representative results for the risk items in question. Testing the metrics allowed the working group to complement the theoretical assessment of their feasibility with an evaluation of their practical applicability. This ultimately led to a rationalization process: on the one hand, risk items measured through comparable metrics were consolidated; on the other, items were excluded when the necessary documentation was not systematically or reliably available.

Testing the two programs also revealed the need for further reflection on the implications of the extended scope of risk detection. It became evident that, for the material/service under investigation, the status of the order directly influenced the activation of specific risk indicators (for instance, whether the order was still being fulfilled or was already out for delivery). Such activation depends both on the relevance of detecting the intrinsic risk at a given point in time and on the availability of the information required at that stage of the process.
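Status-dependent activation can be expressed as a simple lookup. The Python sketch below is minimal: it keeps only the indicators that are both relevant at the current order status and actually scored. The status names and the status-to-indicator mapping are hypothetical examples. The article only notes that order status affects which indicators are activated, not which ones.

```python
# Minimal sketch: activate only the risk indicators that are relevant (and
# measurable) at the current order status. Status names and the mapping are
# hypothetical; the article does not disclose which indicators apply when.

ACTIVE_BY_STATUS = {
    "in_fulfillment": {"technical_specifications", "obsolescence"},
    "out_for_delivery": {"territorial_distance", "authorization_revocation"},
}


def active_scores(status: str, scores: dict[str, float]) -> dict[str, float]:
    """Keep only indicators that are active at this status and have a computed score."""
    active = ACTIVE_BY_STATUS.get(status)
    if active is None:
        raise KeyError(f"unknown order status: {status!r}")
    return {item: score for item, score in scores.items() if item in active}


if __name__ == "__main__":
    scores = {"technical_specifications": 70.0, "obsolescence": 30.0, "territorial_distance": 50.0}
    print(active_scores("in_fulfillment", scores))
    print(active_scores("out_for_delivery", scores))
```

Filtering before aggregation means that a weighted average that renormalizes over the remaining items is computed only on indicators meaningful at that stage. Inactive indicators are then not counted as zero risk.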

In summary, the testing phase proved instrumental in revealing certain operational limitations of the initial theoretical design of the risk measurement system. On the basis of this evidence, the working group was able to validate a concrete, upgraded model capable of providing a more realistic representation of risk exposure and effectively revealing potential areas of risk for the function.

Measuring risks: A complex necessity

The initial premises and the business case presented above are intended to illustrate a possible methodology for developing a risk measurement system, while acknowledging the concrete need for effective business management. At the same time, these contributions highlight how the construction of such a system cannot be reduced to a purely technical exercise. Rather, it entails a complex process of design, methodological refinement, operational experimentation, and gradual dissemination of risk measurement as a component of corporate culture. This is an inherently time-consuming process that, beginning with the development of the measurement tool, progressively evolves into a full-fledged managerial practice.

From this perspective, measurement represents only the first step. Risk metrics, together with warning and alert mechanisms, must inform mitigation choices, starting from a structured simulation of the impacts that each decision will inevitably have on the configuration of current and prospective corporate results. Measurement activates thresholds of attention, supports evaluation, and guides informed decisions and behaviors. In this sense, measurement constitutes just one stage in a broader and more complex organizational learning cycle.

References

- Benedek, P., Bognár, F. (2024). “Compliance risk assessment: Results of a comprehensive literature review.” Acta Polytechnica Hungarica, 21(6).

- COSO (2017). Enterprise risk management: Integrating with strategy and performance. Durham, NC: American Institute of Certified Public Accountants (AICPA).

- Damodaran, A. (2007). Strategic risk taking: A framework for risk management. Wharton School Publishing.

- Dossi, A. (2001). I processi aziendali: Profili di misurazione e controllo. Milano: Egea.

- Kahneman, D., Sibony, O., Sunstein, C.R. (2021). Rumore: Un difetto del ragionamento umano. Milano: UTET.

- Kaplan, R.S., Mikes, A. (2012). “Managing risks: A new framework.” Harvard Business Review.

- Mustapha, A.M., et al. (2020). “A systematic literature review on compliance requirements management of business processes.” International Journal of System Assurance Engineering and Management, 11.

- Nielsen, C. (2023). “ESG reporting and metrics: From double materiality to key performance indicators.” Sustainability, 15(24).

[1] We would like to thank Luca Dessalvi (Vice President of Sourcing and Supply Management at the ELT Group) for his support in compiling this case study.

[2] Please note that the figure is provided for illustrative purposes only. For confidentiality reasons, it was not possible to fully disclose the risk items used by ELT.

[3] Please note that the table is provided for illustrative purposes only. For confidentiality reasons, it was not possible to fully disclose the risk items used by ELT.

Photo iStock / Tanatpon Chaweewat